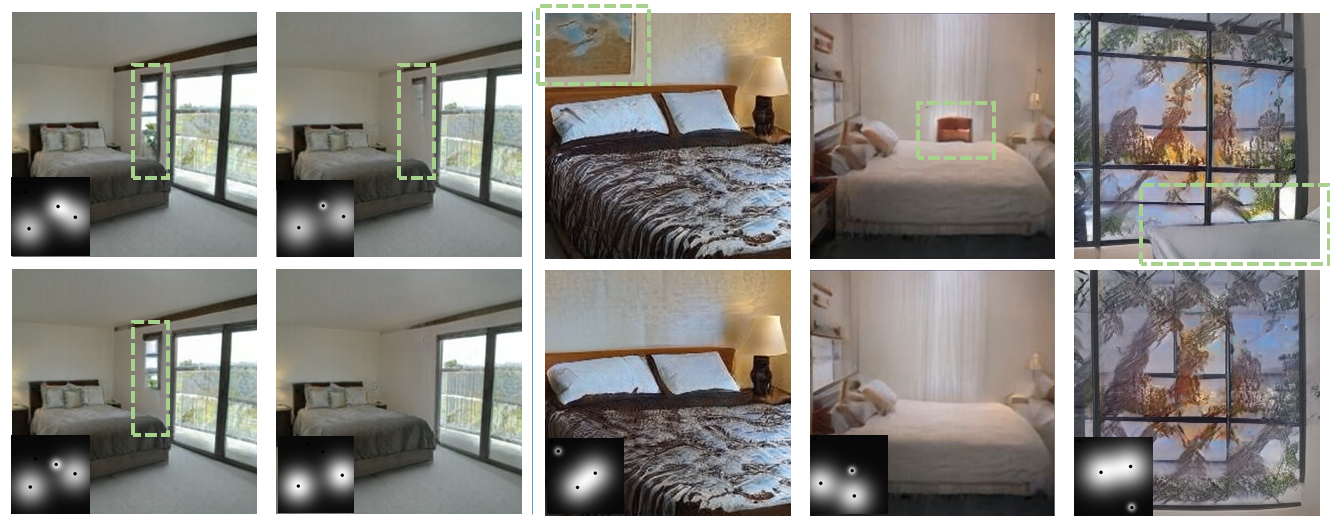

Below, we present samples generated by the SpatialGAN model that show manipulations of multi-object indoor scenes from the LSUN Bedroom dataset.

The first example rearranges objects through sub-heatmap manipulation. The yellow arrows indicate the direction in which objects such as windows and beds are moved, and the rearranged scenes remain coherent overall.
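To make the idea of moving an object via its sub-heatmap concrete, here is a minimal sketch in plain Python. It assumes that each sub-heatmap is a 2D Gaussian bump and that sub-heatmaps are merged with a pixel-wise maximum; neither detail is stated in the text above, and the names `gaussian_blob` and `compose` as well as the grid size and centres are hypothetical.

```python
import math

def gaussian_blob(size, center, sigma):
    """Return a size x size grid with a Gaussian bump at center = (row, col)."""
    cy, cx = center
    return [[math.exp(-((y - cy) ** 2 + (x - cx) ** 2) / (2 * sigma ** 2))
             for x in range(size)] for y in range(size)]

def compose(sub_heatmaps):
    """Merge sub-heatmaps with a pixel-wise maximum (an assumed rule)."""
    size = len(sub_heatmaps[0])
    return [[max(h[y][x] for h in sub_heatmaps) for x in range(size)]
            for y in range(size)]

SIZE = 16
# Hypothetical object locations, e.g. a window and a bed.
centers = [(4, 4), (11, 10)]
before = compose([gaussian_blob(SIZE, c, 2.0) for c in centers])

# "Move" the first object by shifting only its sub-heatmap centre;
# the second sub-heatmap is left untouched.
moved_centers = [(4, 12), centers[1]]
after = compose([gaussian_blob(SIZE, c, 2.0) for c in moved_centers])
```

In this sketch the composed heatmap peaks at the new location after the move, while the peak of the untouched object stays where it was. In a real model the edited heatmap would then be fed to the generator, which is not shown here.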

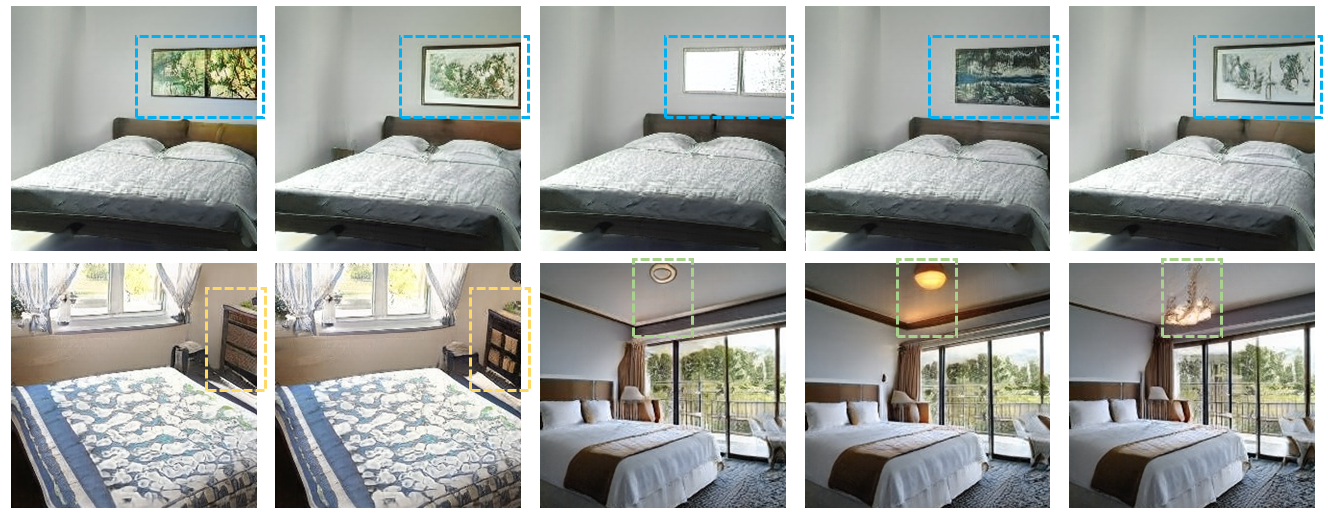

We then remove objects gradually by eliminating their associated sub-heatmaps. Elements such as windows and lamps disappear step by step, while the background and other objects remain mostly unchanged.
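The gradual removal can be pictured as fading one sub-heatmap out while leaving the others at full strength. The sketch below illustrates this under assumptions: Gaussian sub-heatmaps, a pixel-wise maximum for merging, and a linear fade weight are illustrative choices, and `compose_weighted` is a hypothetical helper rather than part of any released code.

```python
import math

def gaussian_blob(size, center, sigma):
    """Return a size x size grid with a Gaussian bump at center = (row, col)."""
    cy, cx = center
    return [[math.exp(-((y - cy) ** 2 + (x - cx) ** 2) / (2 * sigma ** 2))
             for x in range(size)] for y in range(size)]

def compose_weighted(sub_heatmaps, weights):
    """Scale each sub-heatmap by its weight, then merge with a pixel-wise maximum."""
    size = len(sub_heatmaps[0])
    return [[max(w * h[y][x] for h, w in zip(sub_heatmaps, weights))
             for x in range(size)] for y in range(size)]

SIZE = 16
# Two hypothetical objects, e.g. a lamp at (4, 4) and a bed at (11, 10).
blobs = [gaussian_blob(SIZE, (4, 4), 2.0), gaussian_blob(SIZE, (11, 10), 2.0)]

# Fade the first sub-heatmap from full strength to zero; keep the second fixed.
fade_steps = [1.0, 0.5, 0.0]
frames = [compose_weighted(blobs, [w, 1.0]) for w in fade_steps]

# Heatmap value at the faded object's centre in each frame.
peak_at_removed = [frame[4][4] for frame in frames]
```

Here the response at the removed object's centre shrinks with each step, while the response at the other object's centre is unchanged, mirroring the behaviour described above.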

Next, we alter local regions by applying a distinct style code to each sub-heatmap. This enables a variety of local changes, including different paintings, windows, and light types, as marked by the blue and green boxes.
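One way to picture per-region styling is to give each sub-heatmap its own style vector and blend those vectors at every pixel in proportion to the heatmap values. The sketch below does exactly that; it is an illustration under assumptions, not the model's actual mechanism, and `style_map`, the two-dimensional style codes, and the Gaussian sub-heatmaps are all hypothetical.

```python
import math

def gaussian_blob(size, center, sigma):
    """Return a size x size grid with a Gaussian bump at center = (row, col)."""
    cy, cx = center
    return [[math.exp(-((y - cy) ** 2 + (x - cx) ** 2) / (2 * sigma ** 2))
             for x in range(size)] for y in range(size)]

def style_map(sub_heatmaps, style_codes, eps=1e-8):
    """Per-pixel style: heatmap-weighted average of the per-region style codes."""
    size = len(sub_heatmaps[0])
    dim = len(style_codes[0])
    out = []
    for y in range(size):
        row = []
        for x in range(size):
            weights = [h[y][x] for h in sub_heatmaps]
            total = sum(weights) + eps  # eps avoids division by zero far from all blobs
            row.append([sum(w * s[d] for w, s in zip(weights, style_codes)) / total
                        for d in range(dim)])
        out.append(row)
    return out

SIZE = 16
blobs = [gaussian_blob(SIZE, (4, 4), 2.0), gaussian_blob(SIZE, (11, 10), 2.0)]
styles = [[1.0, 0.0], [0.0, 1.0]]  # toy 2-D style codes, one per region
styled = style_map(blobs, styles)

# Swap in a new style code for the first region only.
restyled = style_map(blobs, [[0.5, 0.5], styles[1]])
```

In this toy setup, changing the first style code changes the blended style near the first region's centre and leaves the area around the second region almost untouched, which is the kind of localized edit the boxed regions illustrate.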