The Chinese University of Hong Kong

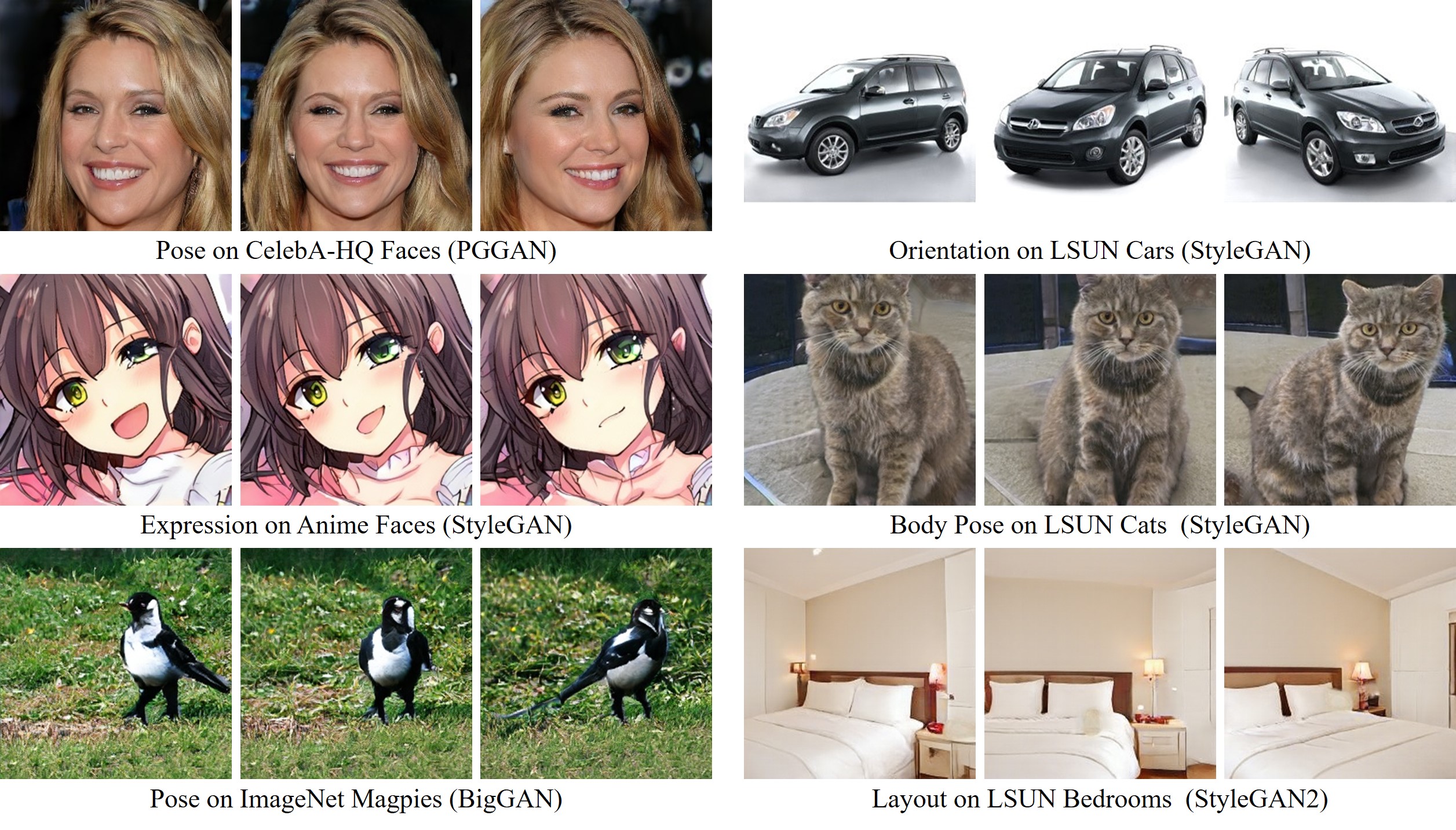

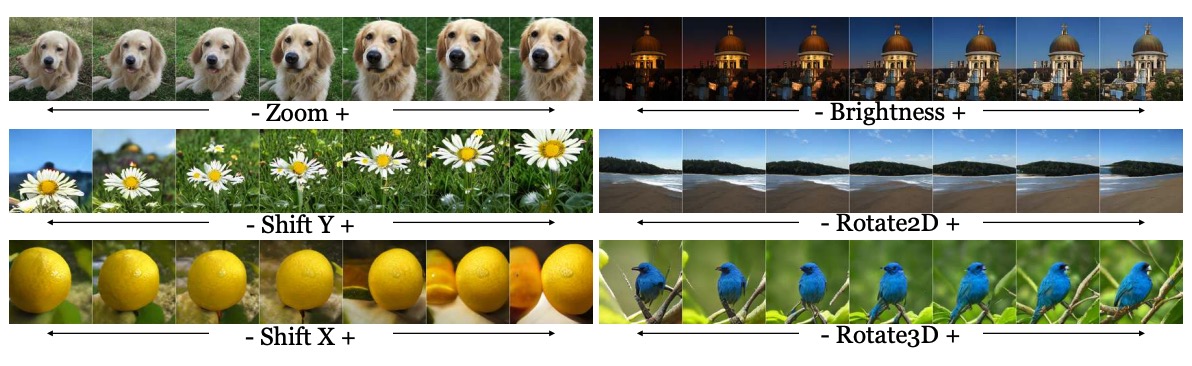

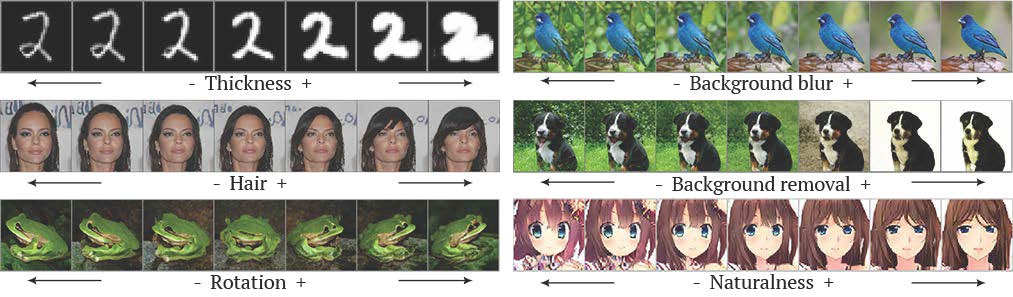

Anime Faces

| Pose | Mouth | Eye |
| --- | --- | --- |

Cats

| Posture (Left & Right) | Posture (Up & Down) | Zoom |
| --- | --- | --- |

Cars

| Orientation | Vertical Position | Shape |
| --- | --- | --- |

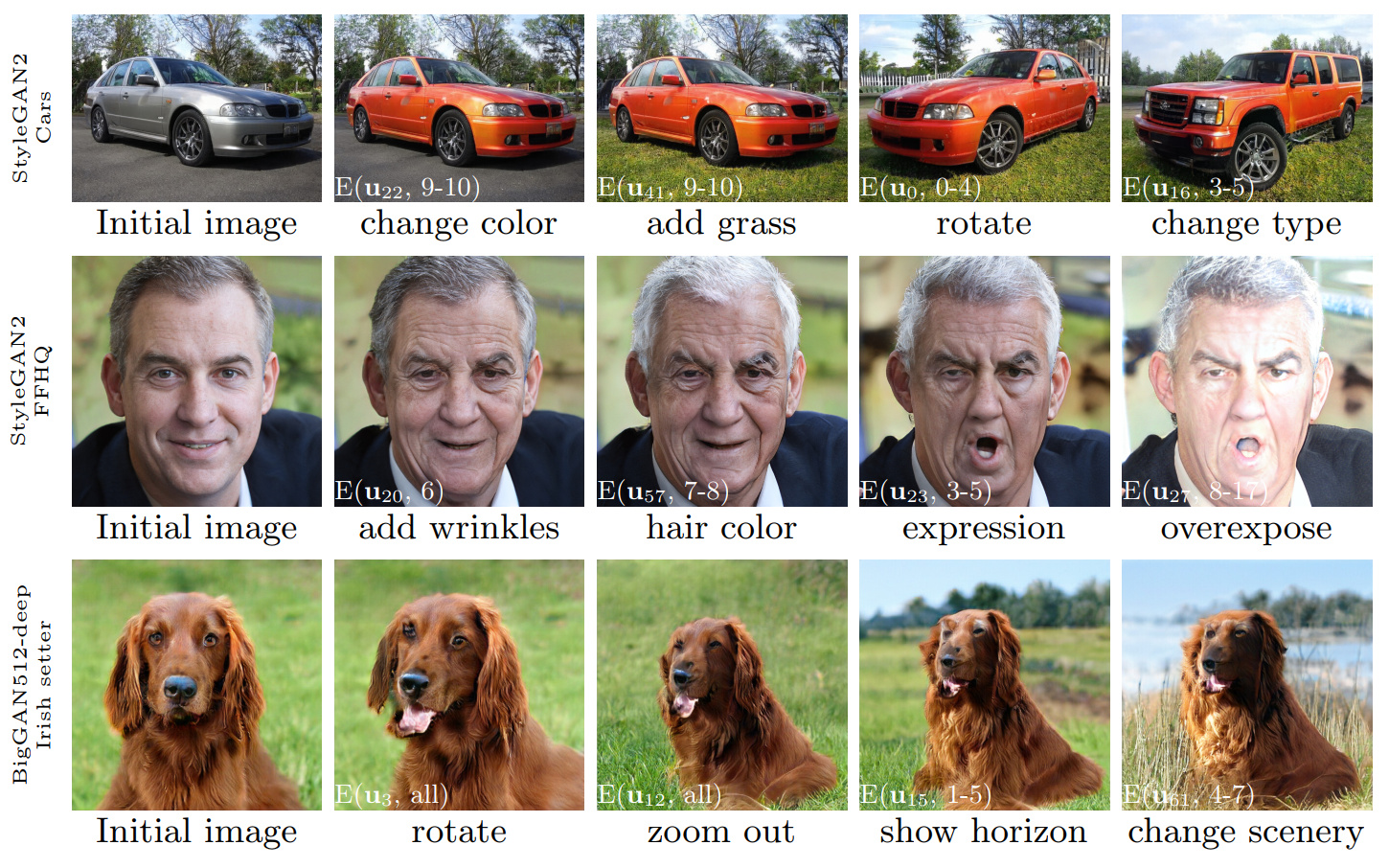

@inproceedings{shen2021closedform,
  title     = {Closed-Form Factorization of Latent Semantics in GANs},
  author    = {Shen, Yujun and Zhou, Bolei},
  booktitle = {CVPR},
  year      = {2021}
}

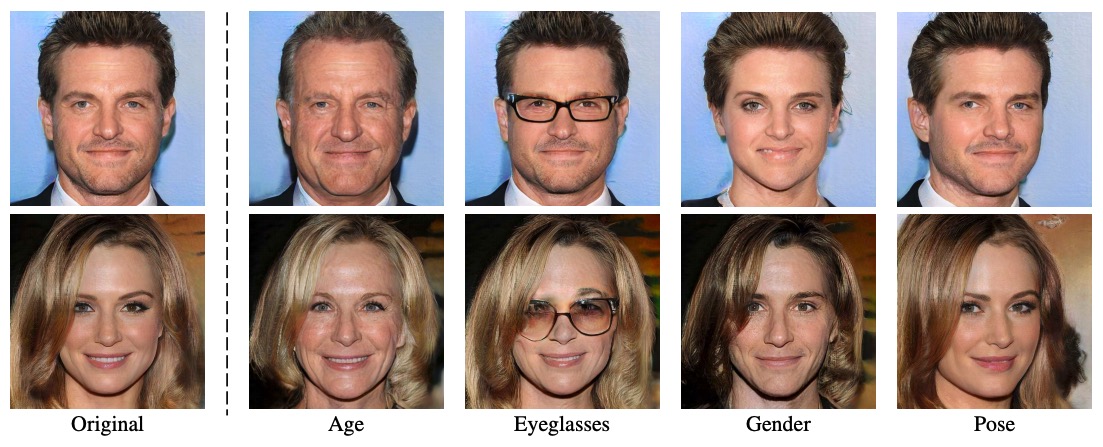

Comment: Interprets the face semantics emerging in the latent space of GANs with the help of off-the-shelf classifiers.
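The comment above describes finding semantics in a GAN latent space by labeling latent codes with an off-the-shelf classifier. A minimal sketch of that general idea follows, under loudly stated assumptions: the classifier scores for each sampled latent code are assumed to be already computed, a least-squares linear fit is used as a simple stand-in for whatever linear classifier the referenced work actually trains, and the helpers `find_semantic_direction` and `edit_latent` are hypothetical names. The demo uses synthetic latents and a synthetic "classifier" in place of a real generator.

```python
import numpy as np


def find_semantic_direction(latents, scores, threshold=0.5):
    """Hypothetical helper: fit a linear boundary that separates latent codes
    by an attribute score and return its unit normal as the editing direction."""
    # Binarize classifier scores into +1 / -1 labels.
    labels = np.where(scores > threshold, 1.0, -1.0)
    # Append a bias column and solve w.x + b ~= label by least squares
    # (a stand-in for training a linear classifier such as an SVM).
    X = np.hstack([latents, np.ones((latents.shape[0], 1))])
    coef, *_ = np.linalg.lstsq(X, labels, rcond=None)
    w = coef[:-1]  # drop the bias term; w is normal to the boundary
    return w / np.linalg.norm(w)


def edit_latent(latent, direction, alpha):
    """Move a latent code along the semantic direction by step size alpha."""
    return latent + alpha * direction


# Synthetic demo: a hidden "true" attribute direction plays the classifier's role.
rng = np.random.default_rng(0)
dim = 16
true_dir = rng.standard_normal(dim)
true_dir /= np.linalg.norm(true_dir)
latents = rng.standard_normal((2000, dim))
scores = 1.0 / (1.0 + np.exp(-4.0 * latents @ true_dir))  # sigmoid "classifier"

direction = find_semantic_direction(latents, scores)
z = latents[0]
z_edited = edit_latent(z, direction, alpha=2.0)
print("cosine with true direction:", float(direction @ true_dir))
```

In a real pipeline the latents would be fed through the generator and the resulting images through the classifier; here the recovered direction should closely align with the hidden one because the labels depend only on that direction.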