The Chinese University of Hong Kong

Discriminative Tasks

Indoor scene layout prediction

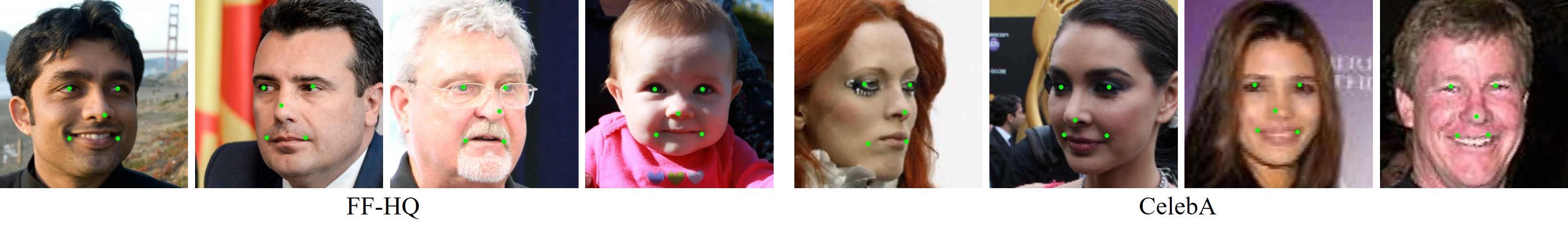

Facial landmark detection

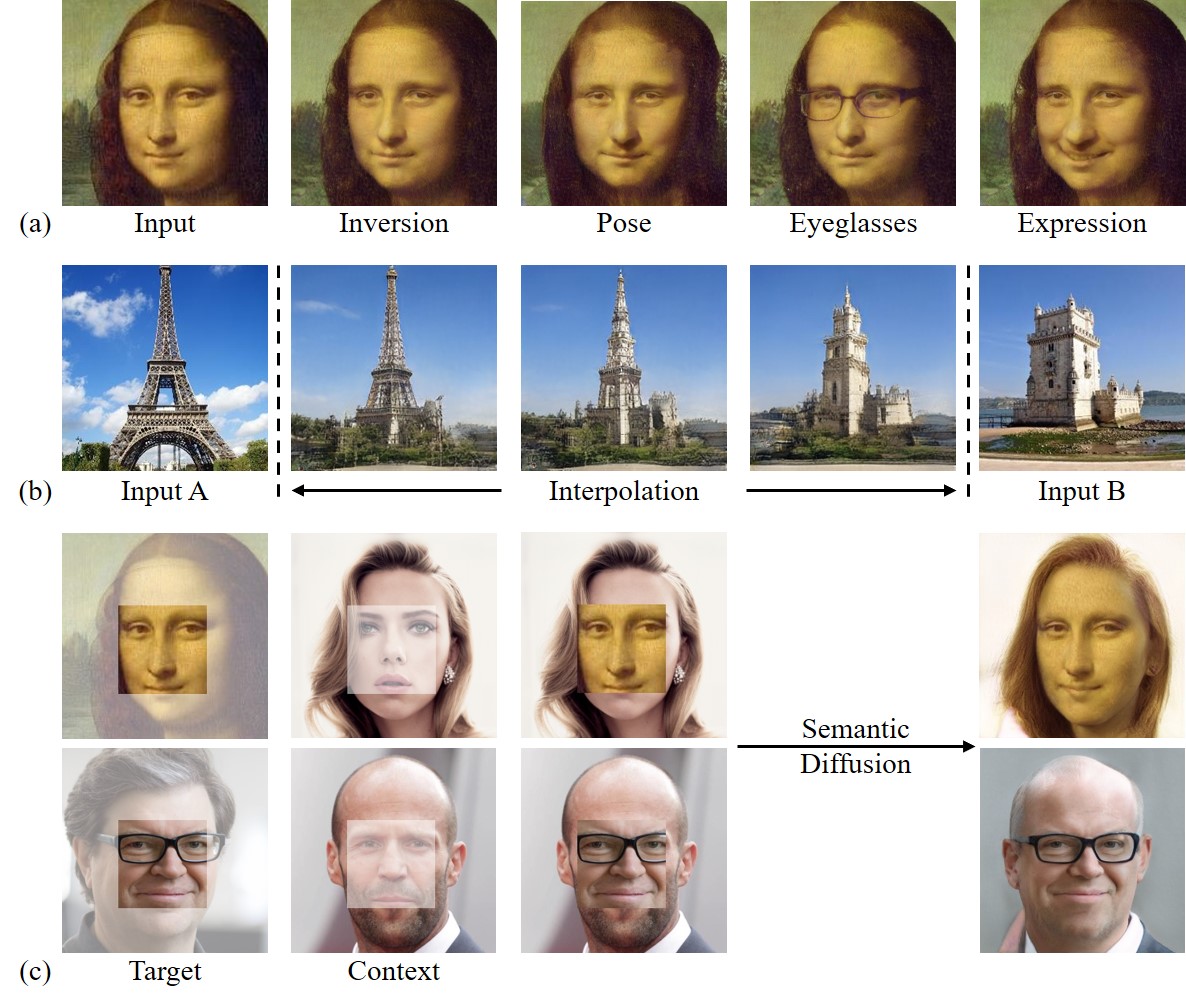

Generative Tasks

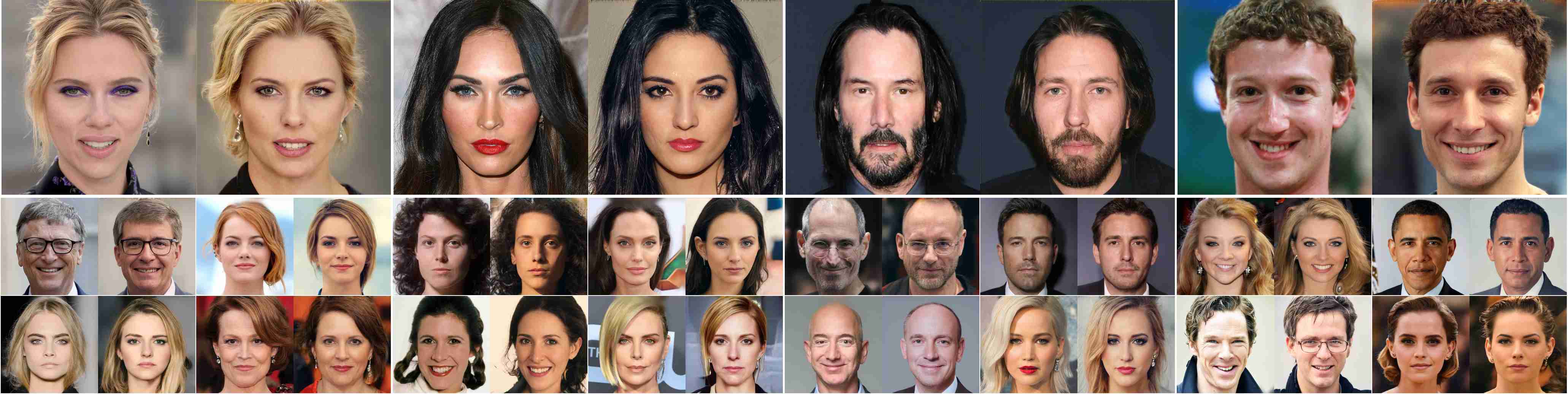

Image harmonization

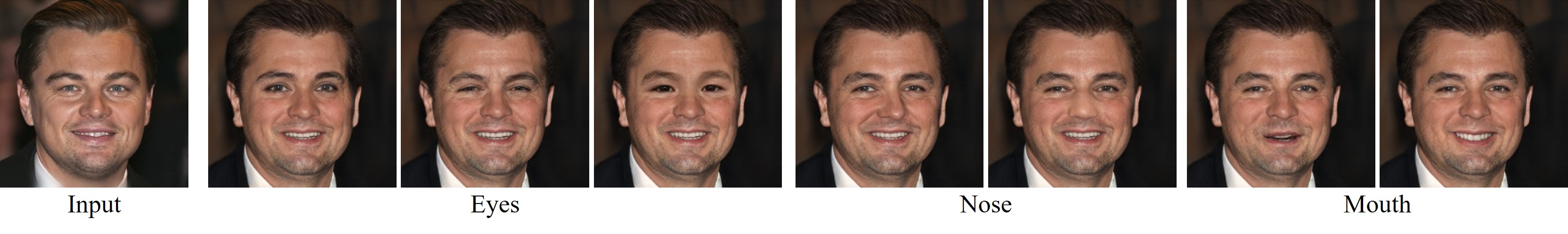

Local editing

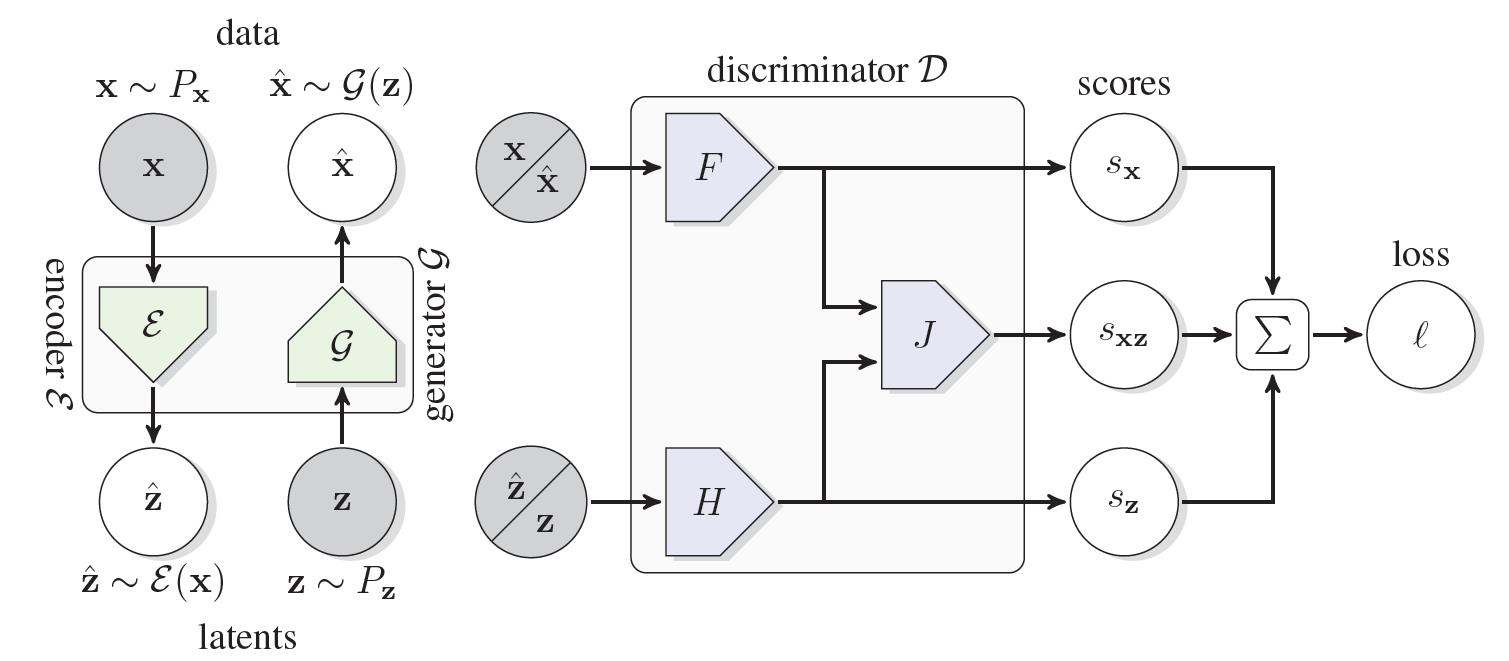

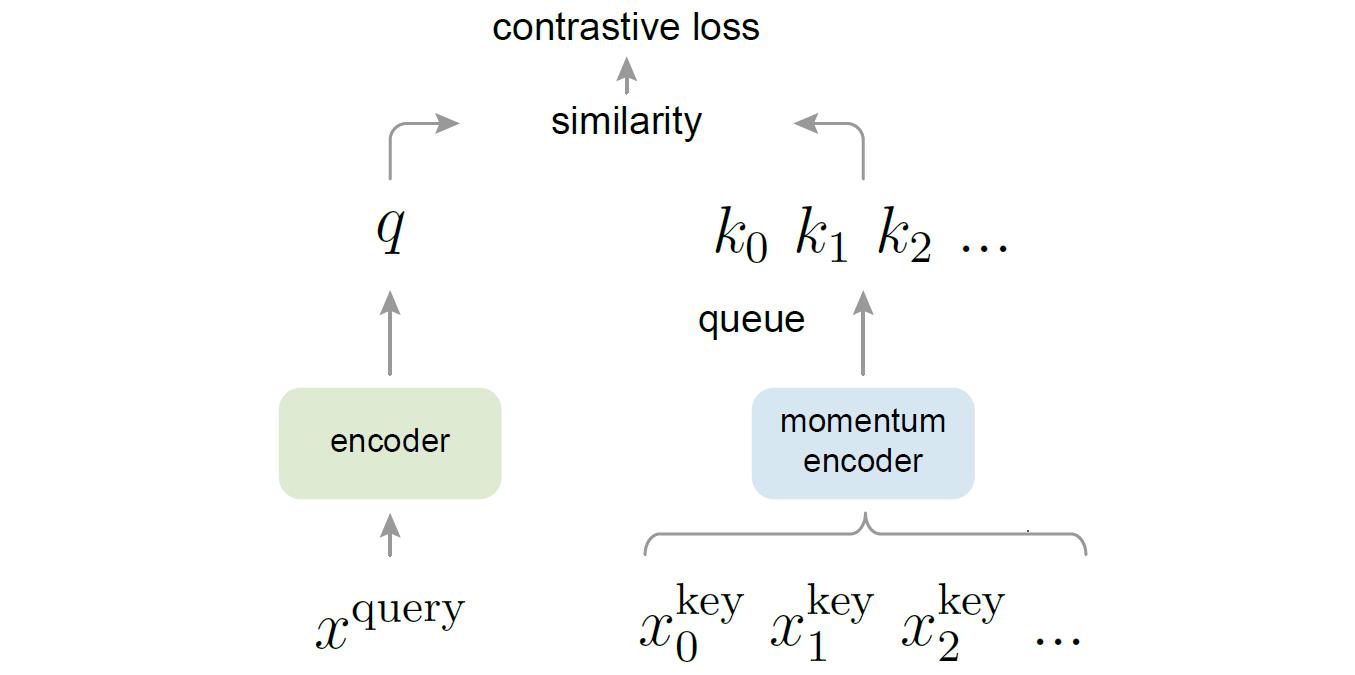

@inproceedings{xu2021generative,
  title     = {Generative Hierarchical Features from Synthesizing Images},
  author    = {Xu, Yinghao and Shen, Yujun and Zhu, Jiapeng and Yang, Ceyuan and Zhou, Bolei},
  booktitle = {CVPR},
  year      = {2021}
}

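Comment: Proposes Generative Hierarchical Features (GH-Feat), learned by training an encoder against a pretrained style-based generator, and applies them to both discriminative and generative tasks.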