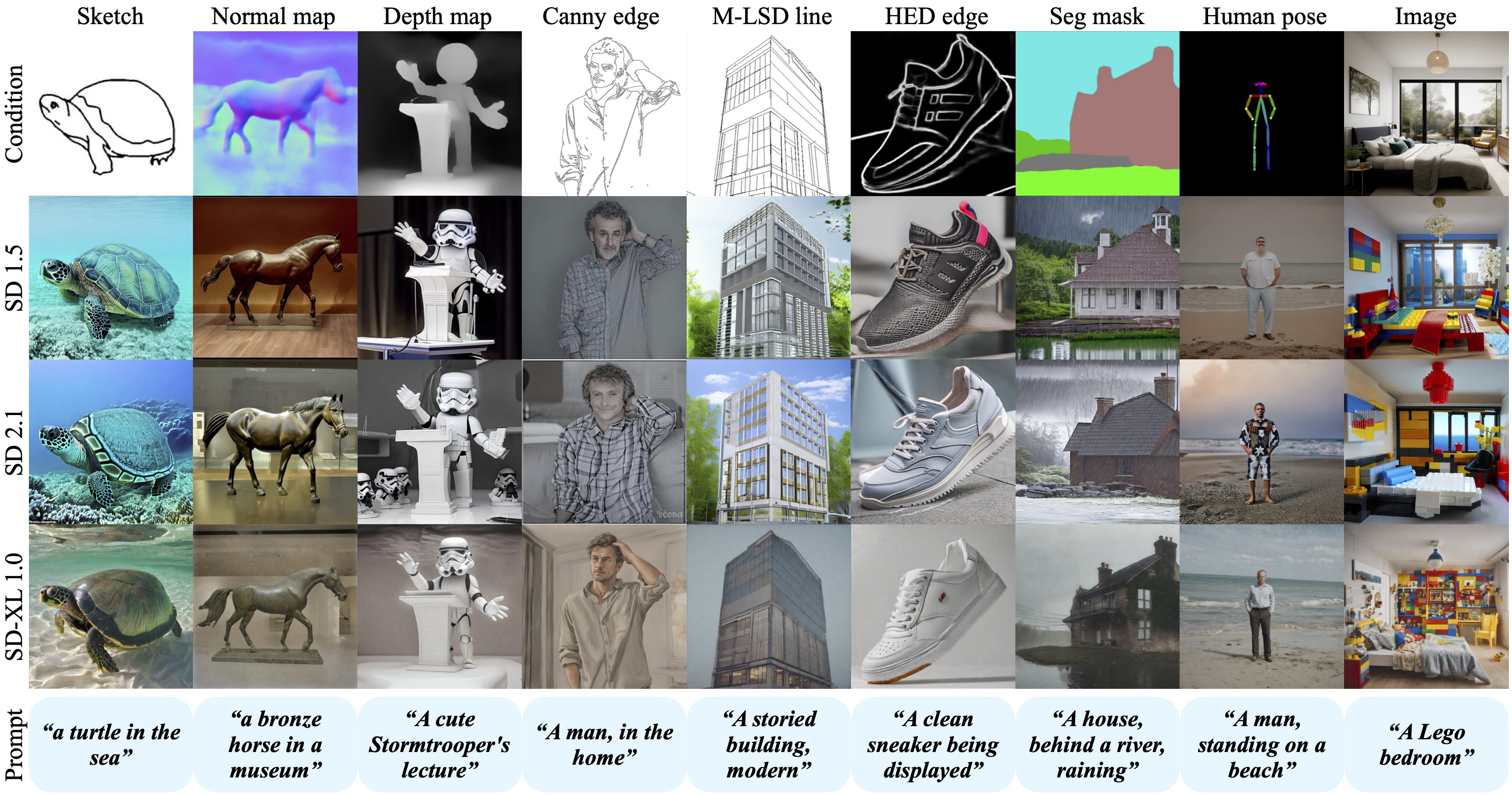

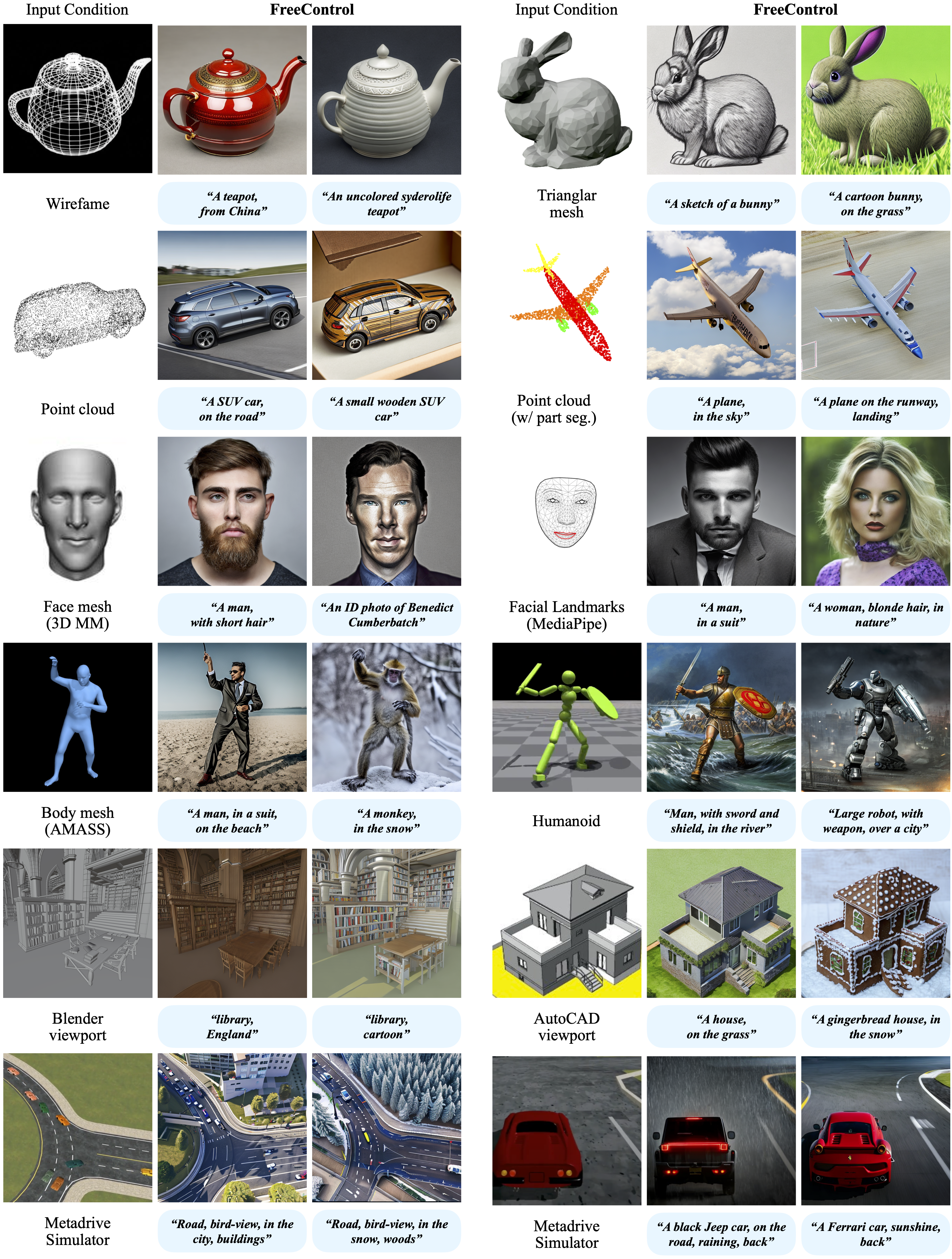

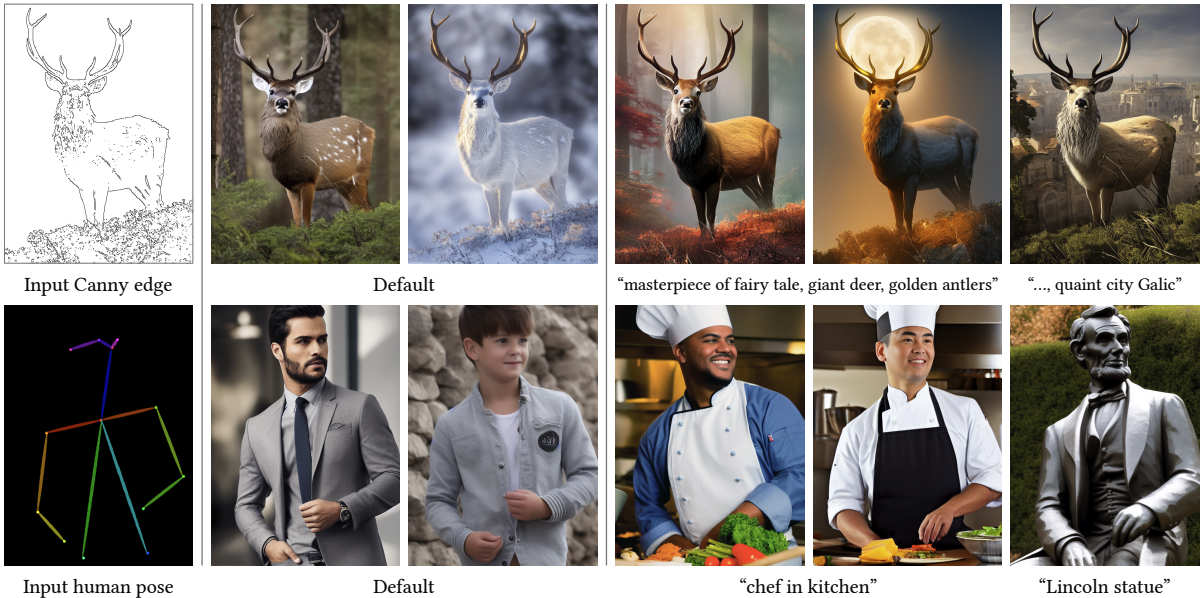

In this work, we present FreeControl, a training-free approach for controllable text-to-image (T2I) generation that supports multiple conditions, architectures, and checkpoints simultaneously.

FreeControl uses structure guidance to align the structure of the generated image with a guidance image, and appearance guidance to share appearance between images generated with the same seed.

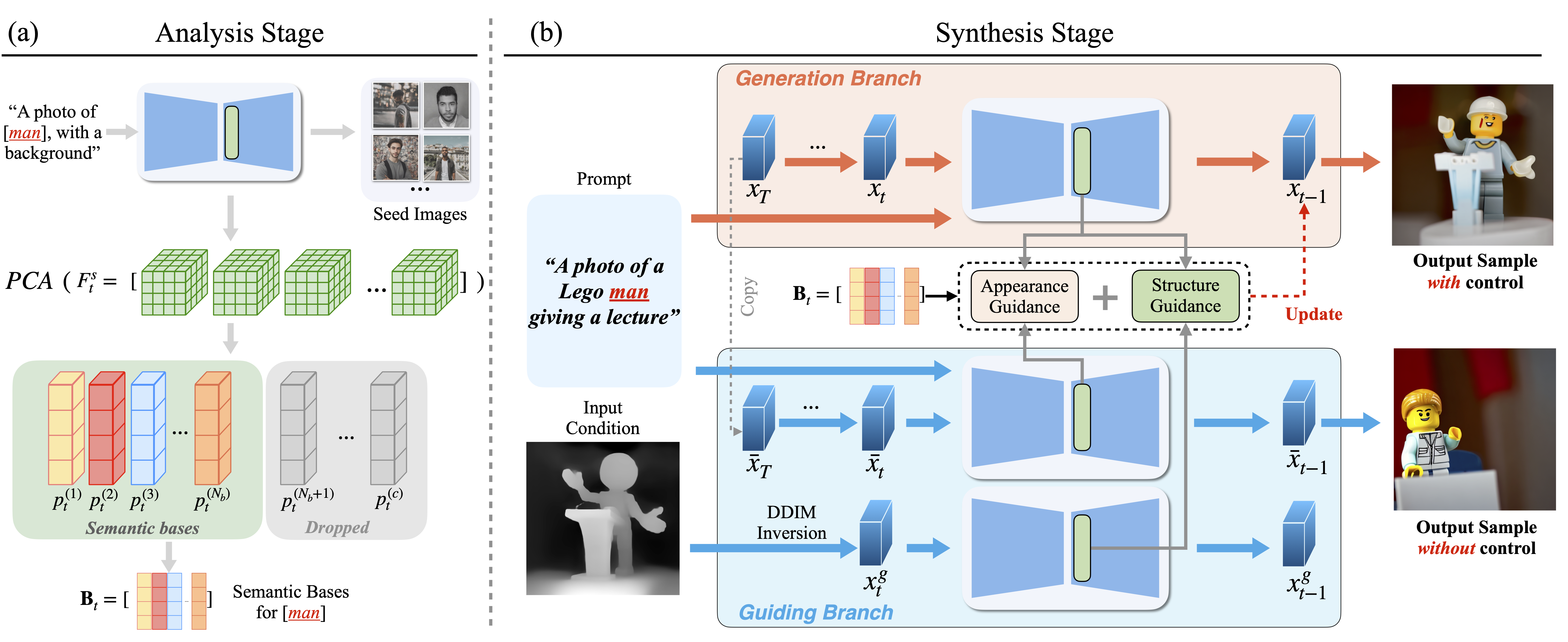

FreeControl combines an analysis stage and a synthesis stage. In the analysis stage, FreeControl queries a T2I model to generate as few as one seed image and then constructs a linear feature subspace from the generated images.
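The analysis stage can be pictured as fitting a low-dimensional linear basis to intermediate diffusion features of the seed image(s). The sketch below assumes the subspace is obtained with PCA (computed through NumPy's SVD) over features flattened to a `(num_tokens, channels)` matrix. The function names `build_feature_subspace` and `project`, the feature layout, and the choice of `k` are illustrative assumptions, not FreeControl's actual implementation.

```python
import numpy as np


def build_feature_subspace(features, k):
    """Fit a rank-k linear subspace to features with PCA.

    features: array of shape (num_tokens, channels), e.g. one seed image's
    features flattened over spatial positions (assumed layout).
    Returns (mean, basis), where basis has k orthonormal rows of length channels.
    """
    if not 1 <= k <= min(features.shape):
        raise ValueError("k must be between 1 and min(num_tokens, channels)")
    mean = features.mean(axis=0)
    # Rows of vt are the principal directions, ordered by decreasing singular value.
    _, _, vt = np.linalg.svd(features - mean, full_matrices=False)
    return mean, vt[:k]


def project(features, mean, basis):
    """Coordinates of each feature vector in the subspace, shape (num_tokens, k)."""
    return (features - mean) @ basis.T
```

Features from several seed images can be stacked with `np.concatenate(..., axis=0)` before fitting; with a single seed image the matrix simply holds that image's tokens. Keeping only the top `k` directions is what makes the subspace compact enough to guide against in the synthesis stage.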

In the synthesis stage, FreeControl employs guidance in the subspace to facilitate structure alignment with a guidance image, as well as appearance alignment between images generated with and without control.
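To make subspace guidance more concrete, here is a hedged NumPy sketch of two energies defined in such a subspace: a structure energy that compares the subspace coordinates of the generated image with those of the guidance image, and an appearance energy that compares per-component feature summaries of the images generated with and without control. The sigmoid-weighted appearance summary, all function names, and the step size are hypothetical choices for illustration. We assume that in a full pipeline the gradients would be backpropagated through the denoising network to the noisy latent, in the style of classifier guidance; here the features are treated as free variables so the example stays self-contained.

```python
import numpy as np


def project(features, mean, basis):
    """Subspace coordinates, shape (num_tokens, k); basis rows are orthonormal."""
    return (features - mean) @ basis.T


def structure_energy(gen_feats, guide_feats, mean, basis):
    """Mean squared distance between the two images' subspace coordinates,
    plus its closed-form gradient with respect to gen_feats."""
    diff = project(gen_feats, mean, basis) - project(guide_feats, mean, basis)
    n = gen_feats.shape[0]
    energy = float(np.sum(diff ** 2) / n)
    grad = 2.0 * diff @ basis / n  # shape (num_tokens, channels)
    return energy, grad


def appearance_vectors(feats, mean, basis):
    """Hypothetical appearance summary: one weighted-mean feature per component,
    weighted by a sigmoid of that component's coordinate at each token."""
    weights = 1.0 / (1.0 + np.exp(-project(feats, mean, basis)))  # (num_tokens, k)
    return (weights.T @ feats) / weights.sum(axis=0)[:, None]  # (k, channels)


def appearance_energy(controlled_feats, reference_feats, mean, basis):
    """Squared distance between appearance summaries of two images."""
    a = appearance_vectors(controlled_feats, mean, basis)
    b = appearance_vectors(reference_feats, mean, basis)
    return float(np.sum((a - b) ** 2))


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    channels, k = 16, 3
    # Random orthonormal basis standing in for the analysis-stage subspace.
    basis = np.linalg.qr(rng.normal(size=(channels, k)))[0].T
    mean = np.zeros(channels)
    guide = rng.normal(size=(100, channels))
    gen = rng.normal(size=(100, channels))
    for step in range(3):
        energy, grad = structure_energy(gen, guide, mean, basis)
        print(f"step {step}: structure energy {energy:.4f}")
        gen = gen - 10.0 * grad  # plain gradient descent on the features
```

Because the structure energy only looks at coordinates inside the subspace, gradient steps change the features along the basis directions and leave the orthogonal complement untouched, which is one way to read "guidance in the subspace".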

Comment: Builds an additional encoder to add spatial conditioning controls to T2I diffusion models.