3 Ant Group, 4 University of California, Los Angeles

Generated videos from our method.

Texture sticking appears in videos generated by StyleGAN-V, where texture sticks to fixed coordinates.

To illustrate this, we track the pixels at fixed coordinates as the video plays. The brush effect in the left part of the following video shows that these pixels barely move.

In contrast, our approach achieves smoother frame transitions.
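The idea of tracking pixels at fixed coordinates over time can be sketched as a spatiotemporal slice: one image row is read from every frame and the rows are stacked along the time axis. This is only an illustrative sketch, not the visualization code used for the videos on this page. The function names `temporal_slice` and `temporal_variation`, the grayscale `(T, H, W)` layout, and the toy data are all assumptions made for the example.

```python
import numpy as np


def temporal_slice(video: np.ndarray, row: int) -> np.ndarray:
    """Stack the pixels of one fixed image row across all frames.

    video: grayscale frames with shape (T, H, W) (assumed layout).
    Returns an array of shape (T, W); line t is row `row` of frame t.
    """
    return video[:, row, :].copy()


def temporal_variation(slice_tw: np.ndarray) -> float:
    """Mean absolute change between consecutive frames at the tracked pixels."""
    return float(np.abs(np.diff(slice_tw, axis=0)).mean())


# Toy data (hypothetical): a random 1-D texture, either frozen in place
# or translated by one pixel per frame.
rng = np.random.default_rng(0)
texture = rng.random(32)
T, H = 8, 4

stuck = np.tile(texture, (T, H, 1))  # texture never moves
moving = np.stack([np.tile(np.roll(texture, t), (H, 1)) for t in range(T)])

stuck_score = temporal_variation(temporal_slice(stuck, row=0))
moving_score = temporal_variation(temporal_slice(moving, row=0))
```

In this toy setting the frozen texture produces a slice whose rows are identical, so its variation score is zero, while the translating texture gives a positive score. A sticking texture in a real video would show up in the same way, as long streaks along the time axis of the slice.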

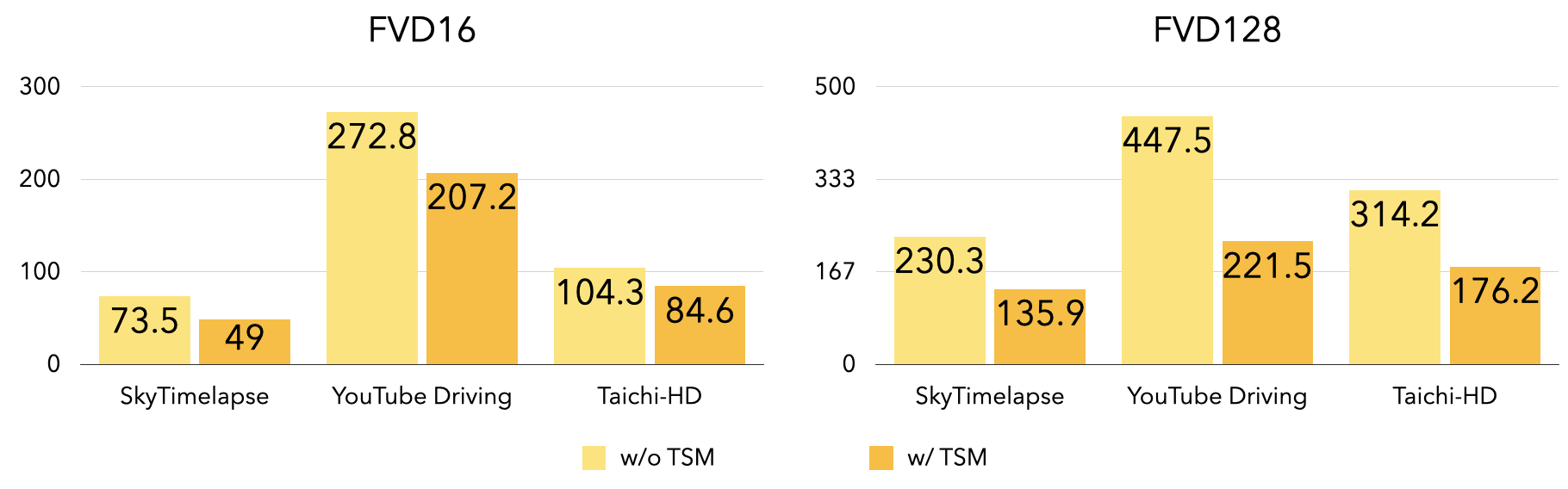

We incorporate the Temporal Shift Module (TSM) into the discriminator to perform explicit temporal reasoning.
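As a rough sketch of what a temporal shift does, the snippet below moves one group of feature channels one step backward in time, another group one step forward, and leaves the rest untouched, so each frame's features mix with those of its neighbors. It follows the shift operation as commonly described for TSM. The `(T, C, H, W)` layout, the `fold_div` value, and where the shift sits inside the discriminator are assumptions, not details taken from this page.

```python
import numpy as np


def temporal_shift(x: np.ndarray, fold_div: int = 8) -> np.ndarray:
    """Shift a fraction of channels along the time axis.

    x: features with shape (T, C, H, W) (assumed layout).
    The first C // fold_div channels take values from the next frame,
    the following C // fold_div channels take values from the previous
    frame, and the remaining channels are copied unchanged. Frames at
    the boundary are zero-padded.
    """
    fold = x.shape[1] // fold_div
    out = np.zeros_like(x)
    out[:-1, :fold] = x[1:, :fold]                  # from the next frame
    out[1:, fold:2 * fold] = x[:-1, fold:2 * fold]  # from the previous frame
    out[:, 2 * fold:] = x[:, 2 * fold:]             # unshifted channels
    return out


# Toy input (hypothetical): 3 frames, 8 channels, 1x1 spatial size,
# where every value in frame i equals i + 1.
x = np.stack([np.full((8, 1, 1), float(i + 1)) for i in range(3)])
y = temporal_shift(x, fold_div=4)
```

The shift itself has no learnable parameters. Any layer applied after it sees features from adjacent frames in addition to the current one, which is what gives a per-frame network access to temporal information.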

As shown in the following chart, performance improves greatly with TSM. (For FVD16 and FVD128, lower is better.)
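For readers unfamiliar with the metric, FVD is generally defined as a Fréchet distance between two Gaussians fitted to features of real and generated videos, where the features come from a pretrained video network. The symbols below are introduced here for illustration and do not appear on this page. By common convention, the suffixes 16 and 128 are assumed to denote the number of frames per evaluated clip.

$$
\mathrm{FVD} = \lVert \mu_r - \mu_g \rVert_2^2 + \operatorname{Tr}\!\left( \Sigma_r + \Sigma_g - 2\,(\Sigma_r \Sigma_g)^{1/2} \right)
$$

Here $\mu_r, \Sigma_r$ are the mean and covariance of the real-video features and $\mu_g, \Sigma_g$ those of the generated-video features. The distance is zero when the two Gaussians coincide, which is why lower values are better.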

Jittering appears in long videos generated by StyleGAN-V, whereas our approach achieves much smoother results.

@article{zhang2022towards,
  title={Towards Smooth Video Composition},
  author={Zhang, Qihang and Yang, Ceyuan and Shen, Yujun and Xu, Yinghao and Zhou, Bolei},
  journal={International Conference on Learning Representations (ICLR)},
  year={2023}
}